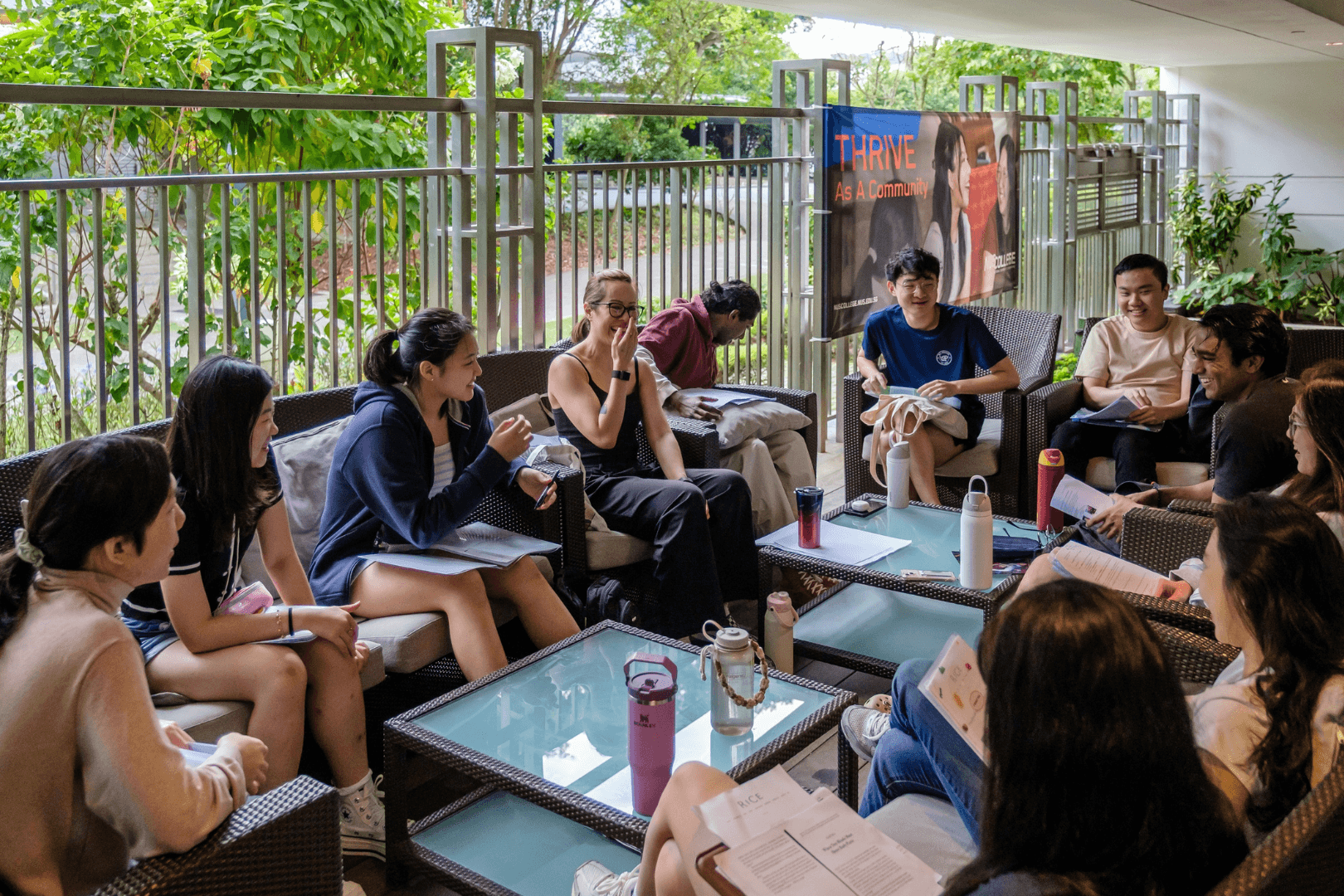

At NUS College, we recently ran something called Touch Grass Week: a voluntary experiment in stepping back from screens and returning, where feasible, to discussion, physical texts, handwritten notes, and the discipline of being present. Some classes moved outdoors. Others relied on printed materials rather than slides. Students also took part in a 24-hour Zero Tech Challenge.

That may sound like a very undergraduate exercise. It is not.

The problem Touch Grass Week named is one most adults already recognise, even if they have stopped trying to solve it. For most of us, technology is no longer something we use at particular moments. It is the atmosphere in which we live. The first thing many people do in the morning is look at a screen. The last thing they do at night is the same. In between, every idle moment is available for colonisation: messages, notifications, news, short videos, algorithmic recommendations, and now AI-generated prompts designed to keep us engaged a little longer.

The real issue is not screens. It is the difference between technology as a tool and technology as a habitat. Tools serve purposes we define. Habitats shape behaviour even when we are not paying attention. A hammer sits in the drawer until you need it. A social media feed does not wait — it reaches for you. Much of modern life is organised around the assumption that convenience is always good and friction is always bad. But some friction is valuable. It creates room for reflection. It slows impulsive responses. It forces us to ask whether an action is necessary rather than merely easy.

We learned this lesson badly with social media. The mistake was not simply that regulation came late — though it did. It was that we asked too few basic questions at the outset. What habits would this technology reward? What forms of dependence would it create? What would happen when systems optimised for engagement became the default infrastructure of everyday life? We now know the answers, and they are not flattering. Platforms were not built to cultivate wisdom, patience, or genuine connection. They were built to capture and retain attention. Once you understand that, the downstream effects make a great deal more sense.

With AI, we still have time to ask those questions earlier — but only just. AI is moving fast, and not only as a productivity tool. A study presented this year examined the use of AI chatbots for emotional support and companionship in Singapore. That is a significant development. AI will not only help us draft emails, summarise documents, or plan holidays. It is beginning to mediate how people think, decide, and relate to one another. The question worth pressing is: what kind of cognitive habitat does that create?

There is a version of this that is benign: AI as a capable assistant that frees human attention for more important things. But there is another version, already visible, in which AI quietly absorbs the tasks through which we do our thinking. Writing a first draft is not just a way of producing text. It is a way of discovering what one thinks. Reading a document carefully is not just information transfer. It is the exercise of judgment. When we outsource these activities too readily, we do not so much save time as trade away the mental activity that makes us competent. The risk is not that AI makes us lazy. It is that it makes us passive: consumers of conclusions rather than producers of thought.

This is not an abstract policy concern for alumni. It is a professional and personal one. Many of us work in environments where responsiveness is rewarded, where every message feels urgent, and where the boundary between work and life has become genuinely porous. We also inhabit families and friendships increasingly punctuated by partial attention. The challenge is not to renounce technology but to recover agency within it.

That means small acts of design rather than grand gestures of abstinence. Hold some meetings device-free. Read one substantial thing each day on paper. Do not outsource every first draft to AI. Protect one conversation a day from the presence of a phone. Refuse the assumption that every spare moment must be filled.

None of this is nostalgic. It is preparatory. In a world of increasingly persuasive technologies, the ability to direct one’s own attention will become a more important form of freedom — and, for knowledge workers especially, a more important professional asset.

That, in the end, is what Touch Grass Week was about. Not rejecting the future, but rehearsing how to live in it well. A university should help students learn to use powerful tools. It should also help them decide when not to. The same is true for the rest of us. To touch grass is not to flee modern life. It is to insist that technology remain a servant rather than become a master, and to practise that insistence before it becomes too hard.

Professor Chesterman is David Marshall Professor of Law and Vice Provost (Educational Innovation) at the National University of Singapore, where he is also the founding Dean of NUS College. He serves as AI Governance and Policy Lead at the NUS AI Institute and Editor of the Asian Journal of International Law.
